Self-Supervised Convolutional Subspace Clustering Network

Abstract

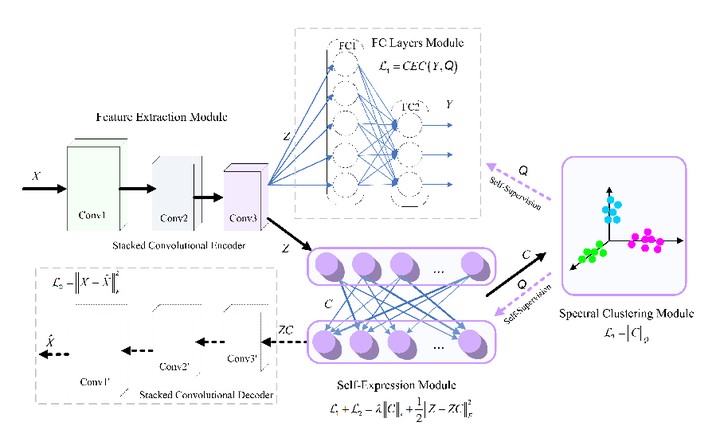

Subspace clustering methods based on data self-expressiveness have become very popular for learning from data that lie in a union of low-dimensional linear subspaces. However, in the absence of proper feature extraction, the applicability of subspace clustering has been limited, because practical visual data in raw form do not necessarily lie in such linear structures. On the other hand, while Convolutional Neural Networks (ConvNets) have been demonstrated to be a powerful tool for feature extraction from visual data, training a ConvNet usually requires a large amount of labeled data, which is unavailable in subspace clustering applications. To achieve feature learning and subspace clustering simultaneously, we propose an end-to-end trainable framework called the Self-Supervised Convolutional Subspace Clustering Network (S2ConvSCN), which combines a ConvNet module (for feature learning), a self-expression module (for subspace clustering), and a spectral clustering module (for self-supervision) into a joint optimization framework. In particular, we introduce a dual self-supervision that exploits the output of spectral clustering to supervise the training of both the feature learning module (via a classification loss) and the self-expression module (via a spectral clustering loss). Our experiments on four benchmark datasets show the effectiveness of the dual self-supervision and demonstrate superior performance of our proposed approach.
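To make the notion of self-expressiveness concrete, the following is a minimal NumPy sketch of the basic idea only: each data point is written as a linear combination of the other points, and the resulting coefficients define an affinity that spectral clustering can consume. It is not the S2ConvSCN network. As a simplifying assumption it uses a ridge (Frobenius-norm) regularizer with a closed-form solution, works on raw vectors rather than learned ConvNet features, and the names `self_expression_ridge`, `affinity_from_coefficients`, and `lam` are hypothetical.

```python
import numpy as np


def self_expression_ridge(X, lam=1e-2):
    """Solve min_C ||X - X C||_F^2 + lam * ||C||_F^2 in closed form.

    X has shape (d, n): each column is one data point.
    Assumption: ridge regularization is chosen here only because it
    yields the closed form C = (X^T X + lam I)^{-1} X^T X.
    """
    n = X.shape[1]
    gram = X.T @ X
    return np.linalg.solve(gram + lam * np.eye(n), gram)


def affinity_from_coefficients(C):
    """Build a symmetric, non-negative affinity matrix from C."""
    A = 0.5 * (np.abs(C) + np.abs(C).T)
    np.fill_diagonal(A, 0.0)  # ignore each point's self-representation
    return A


if __name__ == "__main__":
    # Toy data: 5 points in each of two orthogonal 2-D subspaces of R^5.
    rng = np.random.default_rng(0)
    X = np.zeros((5, 10))
    X[0:2, :5] = rng.standard_normal((2, 5))  # subspace 1: coordinates 0-1
    X[2:4, 5:] = rng.standard_normal((2, 5))  # subspace 2: coordinates 2-3

    A = affinity_from_coefficients(self_expression_ridge(X))
    print("largest cross-subspace affinity: ", A[:5, 5:].max())
    print("largest within-subspace affinity:", A[:5, :5].max())
```

On this toy data the affinity is block-diagonal up to numerical precision, so points connect only to points from their own subspace. This block structure is the property that a spectral clustering step relies on to recover the subspaces.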