Classification via Semi-Riemannian Spaces

Abstract

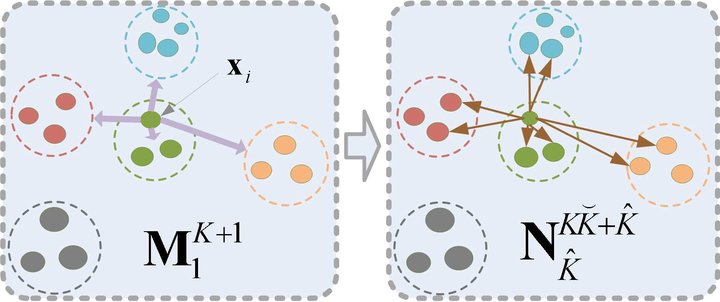

We develop a geometric framework for linear or nonlinear discriminant subspace learning and classification. In this framework, the class structures are modeled as a semi-Riemannian manifold, regarded as a submanifold embedded in an ambient semi-Riemannian space. The class structures of the original samples are characterized and deformed by the local metrics of this space. These semi-Riemannian metrics are uniquely determined by the smoothing of discrete functions and the nullity of the semi-Riemannian space. Under this geometrization, optimizing class structures in the feature space is equivalent to maximizing the quadratic quantities of metric tensors in the semi-Riemannian space, so supervised discriminant subspace learning reduces to unsupervised semi-Riemannian manifold learning. Building on this framework, we present a novel algorithm, semi-Riemannian discriminant analysis (SRDA), for subspace-based classification. We test SRDA against existing algorithms on face recognition (singular case) and handwritten capital letter classification (nonsingular case). The experimental results show that SRDA performs well on both tasks, suggesting that semi-Riemannian geometry is a promising new tool for pattern recognition and machine learning.