Construction of Incoherent Dictionaries via Direct Babel Function Minimization

Abstract

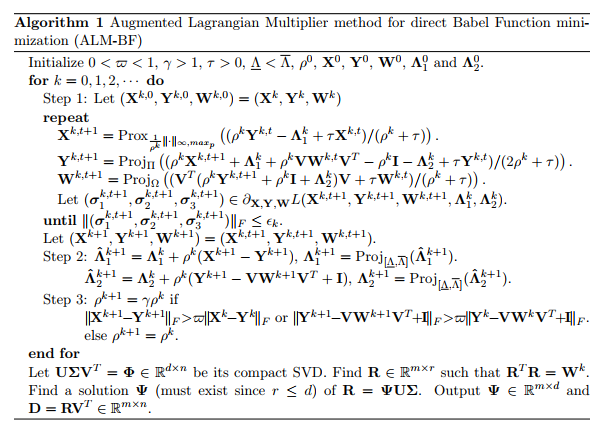

Highly incoherent dictionaries have broad applications in machine learning. Minimizing the mutual coherence is a common intuition to construct incoherent dictionaries in the previous methods. However, as pointed out by Tropp (2004), mutual coherence does not offer a very subtle description and Babel function, as a generalization of mutual coherence, is a more attractive alternative. However, it is much more challenging to optimize. In this work, we minimize the Babel function directly to construct incoherent dictionaries. As far as we know, this is the first work to optimize the Babel function. We propose an augmented Lagrange multiplier based algorithm to solve this nonconvex and nonsmooth problem with the convergence guarantee that every accumulation point is a KKT point. We define a new norm |X|_{\infty,maxp} and propose an efficient method to compute its proximal operation with O(n^2 logn) complexity, which dominates the running time of our algorithm, where max_p means the sum of the largest p elements and n is the number of the atoms. Numerical experiments testify to the advantage of our method